Avoiding Randomization Failure in Program Evaluation

Gary King, Richard Nielsen, Carter Coberley, James Pope, Aaron Wells. 2011. "Avoiding Randomization Failure in Program Evaluation". Population Health Management, 14, 1_suppl, Pp. S11-S22.

Abstract

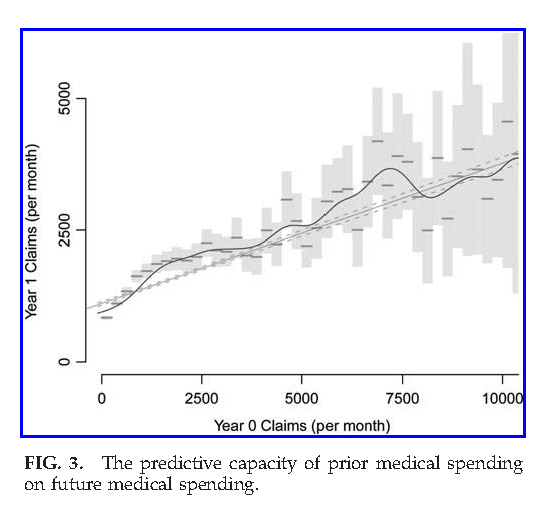

We highlight common problems in the application of random treatment assignment in large-scale program evaluation. Random assignment is the defining feature of modern experimental design. Yet errors in design, implementation, and analysis often prevent real-world applications from benefiting from the advantages of randomization. The errors we highlight cover the control of variability, levels of randomization, size of treatment arms, and power to detect causal effects, as well as the many problems that commonly lead to post-treatment bias. We illustrate with an application to the Medicare Health Support evaluation, including recommendations for improving the design and analysis of this and other large-scale randomized experiments.

See Also

- [Book] The Effect of War on the Supreme Court (2006)

- [Paper] The Supreme Court During Crisis: How War Affects only Non-War Cases (2005)

- [Paper] Deaths From Heart Failure: Using Coarsened Exact Matching to Correct Cause of Death Statistics (2010)

- [Paper] Experimental Evidence on the (Limited) Influence of Reputable Media Outlets (2025)

- [Paper] Methods for Extremely Large Scale Media Experiments and Observational Studies (Poster) (2014)

- [Presentation] Public Policy for the Poor? A Randomized Evaluation of the Mexican Universal Health Insurance Program (Harvard School of Public Health) (2022)

- [Paper] Letter to the Editor on the 'Medicare Health Support Pilot Program' (by McCall and Cromwell) (2012)

- [Paper] Comparative Effectiveness of Matching Methods for Causal Inference (2011)